This is more of a question: will there ever be AI usage during the development of Haiku? If there are plans to use it, not integrated into the OS but just for a few ideas, will everybody on the forum be notified, or will it be undisclosed? And given how AI is currently progressing, will Haiku ever use it for other purposes in general?

Hope not.

Some independent developers currently use AI to assist in application development. As long as there is disclosure and the source remains open, I don’t see a problem with that. But I certainly do not want AI in our OS core.

I do not want Haiku listening to my every word, watching me through the camera or calculating how to get me to go to Home Depot. The MAIN thing I love about Haiku is that it has integrity. Technical and philosophical integrity. In fact, it’s the only usable OS I know of that does.

It’s really difficult to say if it will be allowed in the future or not.

I personally hope it won’t, and I know that a few other developers are also against it, but there are also some in favor of it, and looking into my crystal ball, I fear that some compromises may be made in the future.

Even in the current situation, AI-generated code isn’t completely forbidden, but a rule states that it’s difficult to verify that such code doesn’t violate any copyrights, since the models were trained on material with incompatible licenses, and that code whose copyright situation isn’t clear can’t be accepted.

I don’t think any AI code has made it into the Haiku source yet based on that rule.

My personal stance on this is that AI creates a lot more problems than it solves (does it even solve any?)

- AI server farms consume a lot of energy and water, resulting in higher energy costs for the people in the unfortunate situation of living near data centers; it’s also bad for the environment.

- AI is controlled by a few very big megacorporations that I prefer not to have anything to do with; it’s not something you can easily run on your own hardware in most cases.

- AI-generated code is often full of mistakes, and fixing them can take longer than coding the thing yourself by hand.

- The knowledge of how computers and programs work can get lost if you no longer need to know how to write code to create applications. Taking this further, you may not even understand the code of your own application, since you didn’t write it.

For those and many more reasons, I personally hope that the AI bubble implodes sooner rather than later and that the Haiku project stays clear of this questionable technology, made by even more questionable corporations.

But then again, my crystal ball doesn’t give me a clear answer whether this will be the case forever or if the situation changes some day.

One of the things I like about Haiku is the focus on personal craftsmanship in Haiku’s development culture; it’s frankly one of Haiku’s most endearing attributes.

So, while both of the previous responses are negative about having generative “AI” code or functionality in Haiku, and for legit reasons, in my opinion neither rejects “AI” strongly enough.

What kind of question is that? How is anybody supposed to answer that? It would be a different thing if you asked about the current situation (it was discussed and explained in several threads already), but nobody knows what will happen in 2 years, let alone “ever”.

Not this “AI”.

Easy now. The guy just asked a question. No need to be harsh or discourage anyone.

I would say the current rule is already as much compromise as I’m willing to make here.

The wording is quite vague because it’s hard to write rules that age well when the tools evolve, and it’s easier to add more restrictions later than to remove existing ones.

The net effect of the current rule is, essentially, no LLM-generated code in Haiku, as much as we can enforce it. If someone sends a contribution where they generated some code and then reworked it until it is actually good, we would likely never know. But in code reviews we have started asking people to source their information as a way of extra checking.

As for people using LLMs in other ways, such as asking them questions about the code, there isn’t much we can do about it with code contribution rules. These wouldn’t be enforceable. But we can ask people to source their claims (with existing documentation, not “I asked a chatbot and it says this is how it works”) and we can educate them about the problems with LLMs.

And if future code contributions raise new problems, we can always make the rules stricter. I doubt we will relax them to allow more things.

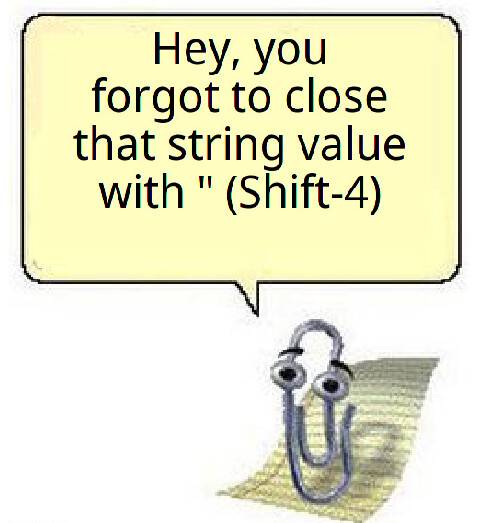

I must admit to being tempted. When I’ve spent half an hour hunting down the cause of a crash, then the thought “Can’t an AI just track down the missing end-bracket for me?” does occur. Most of my problems are like that, it’s not that I didn’t understand the concept, it’s a silly little bracket or quotation mark that my fingers missed (or doubled-up) on the keyboard.

But we need to consider why there is this massive push towards AI-assisted coding. It is because management believes it will get more code out the door, while paying salaries to fewer code monkeys. Profit! Leaving aside whether that is actually true (Hint: not necessarily), how does it apply to Haiku?

Everyone in this project, including our one remunerated coder, does it because we want to. It’s about the challenge of figuring out new things, trying, perhaps failing, but always learning. Coding with an AI takes that away from me. The AI writes the program and I’m just the QA guy, when it should be the other way round: the program expressing my unique take on what it should be, with the AI just watching over my shoulder for silly mistakes.

Of course we’ve had that sort of thing for decades already, with code highlighting. It could be slightly smarter.
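For the missing end-bracket case in particular, no AI is needed: a stack-based checker, the same idea editors use for bracket matching, finds it deterministically. The sketch below is illustrative only; `find_unbalanced` is a hypothetical helper, and it assumes brackets inside strings and comments are counted like any others.

```python
# Stack-based bracket checker: a deterministic, non-AI way to locate a
# missing or mismatched bracket. Assumption: string and comment contents
# are not skipped, so brackets inside literals are counted too.
PAIRS = {")": "(", "]": "[", "}": "{"}
OPENERS = set(PAIRS.values())


def find_unbalanced(source: str):
    """Return (line, column, char) of the first bracket problem, or None.

    Lines and columns are 1-based. A stray or mismatched closer is reported
    where it occurs; an unclosed opener is reported where it was opened.
    """
    stack = []  # (char, line, column) for each opener not yet matched
    line, col = 1, 0
    for ch in source:
        if ch == "\n":
            line, col = line + 1, 0
            continue
        col += 1
        if ch in OPENERS:
            stack.append((ch, line, col))
        elif ch in PAIRS:
            if not stack or stack[-1][0] != PAIRS[ch]:
                return (line, col, ch)
            stack.pop()
    if stack:
        open_ch, open_line, open_col = stack[-1]
        return (open_line, open_col, open_ch)
    return None


if __name__ == "__main__":
    code = "int main() {\n    if (x) {\n        run();\n}\n"
    print(find_unbalanced(code))  # (1, 12, '{')
```

Note that such a checker can only report which opener is left unmatched, not where the programmer meant the closer to go; that guess is the part a "slightly smarter" highlighter could improve on.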

Perhaps one day, when Haiku is a dominant force in the computer industry, with millions of users, and we all wear suits, it will become necessary for us to revisit this. Perhaps.

What I hate about the AI trend is that you can’t just use it locally with no internet connection. The AI is always an online “service” and it spies on the user and has all kinds of trickery. This is obviously not something that belongs in an open-source OS.

Access

As long as AI runs as an app or in the browser as an optional add-on, I’m fine with it. I have used Grok a little to “vibe-code” an instruction decoder for an experimental processor in VHDL. I could use it only because I knew enough about how to properly architect an instruction set as an extended subset of WebAssembly (substituting smaller, parallel operations for monolithic single ops).

Neural Processing Unit?

Most people seem to think that an NPU can be used only by AI, but that isn’t actually the case. The math functions processed by the NPU are just bog-standard linear algebra functions (aka matrix math). My most hopeful and promising use of an NPU is using it as a replacement for a GPU on a system-on-a-chip so that it will perform better. Nothing at all to do with AI, unless the AI was already programmed to use a GPU anyway.
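To make the "just matrix math" point concrete, here is a plain matrix multiply (repeated multiply-accumulate), the kind of operation NPUs and GPUs accelerate. This is a pure-Python illustration of the math only; it does not use any NPU, GPU or driver API. The same `matmul` routine scales 2D vertices (a graphics job) and computes a dense neural-network layer (an AI job, shown here without bias or activation).

```python
# Plain matrix multiplication (multiply-accumulate). Pure Python, no
# hardware API involved; it only illustrates the underlying math.

def matmul(a, b):
    """Multiply an m x n matrix by an n x p matrix (both as lists of rows)."""
    n = len(b)
    if any(len(row) != n for row in a):
        raise ValueError("inner dimensions do not match")
    p = len(b[0])
    return [
        [sum(row[k] * b[k][j] for k in range(n)) for j in range(p)]
        for row in a
    ]


if __name__ == "__main__":
    # Graphics use: scale two 2D vertices (one per row) by 2 in x, 3 in y.
    vertices = [[1, 1], [2, 0]]
    scale = [[2, 0], [0, 3]]
    print(matmul(vertices, scale))  # [[2, 3], [4, 0]]

    # Neural-network use: a dense layer is the same product,
    # inputs (1 x 2) times weights (2 x 3), bias and activation omitted.
    inputs = [[1, 2]]
    weights = [[1, 0, -1], [0, 1, 1]]
    print(matmul(inputs, weights))  # [[1, 2, 1]]
```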

Best Practices?

The best option, IMO, is that WebGPU can already access the features of a GPU from a browser using APIs similar to Apple’s Metal architecture (or so I’ve been led to believe). That means that the browser can do everything needed to implement AI independently of an operating system anyway!

Once AMD comes out with an APU that also includes an NPU, presumably to run Windows 11 on, we’ll be pretty much set! All that has to be done is to make a graphics driver accelerant that targets the NPU and on-board frame buffer, then make sure that WebPositive has WebGPU support accessible from WebAssembly, and recompile! The NPU instructions are fixed within the AMD64/x86_64 instruction set, so we’ll likely never need another graphics driver for any newer system that has the NPU and AMD integrated graphics!

I would like the ability to run local LLM models.

It’s possible with llama.cpp, but you will need a powerful computer.

There are many LLMs that can be run locally: Amber, LLaMA, Gemma, MiniMax, DeepSeek, Falcon. LLM AI brings with it many different problems, but the inability to use it locally is not one of them.

I could not find any download links to any of these LLMs.

Here you go. Just takes a bit of a web search!

For llama.cpp you will need to stick to a specific version and then apply a patch from 3dEyes.

I ran it last year, but it was too slow on my machine: Install and run llama.cpp | Haiku Insider

But it was working.

So the way to run them locally, is to set up a web server and trick them into thinking they are online when they are not. Genius.

LLMs don’t need any internet connection, but they need a specific model to run.

Providing web access from outside your local network is just an optional service you can offer.