A few answers (this is my point of view, others may have different opinions):

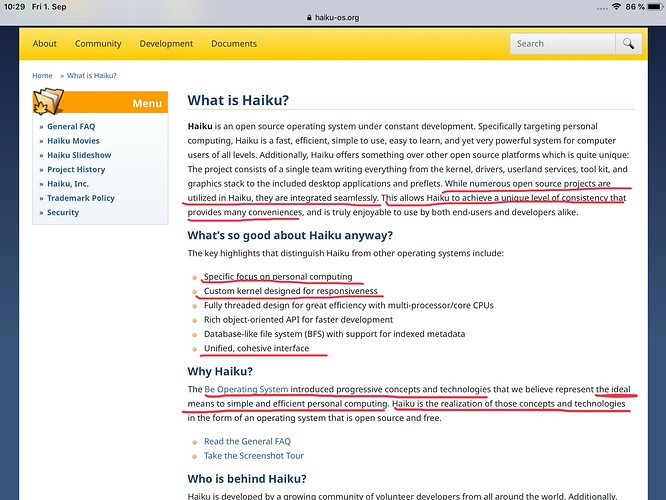

About the focus on personal computing:

Haiku tries to do only one thing: provide an operating system for personal computers. We won’t do servers, we won’t do smartphones, we won’t do game consoles or embedded systems. This means that in many areas, we can make decisions with far fewer compromises than other systems or platforms.

For example, the GNOME desktop environment is trying to build a system that is usable both with a touchscreen (on, say, an iPad-like tablet) and with a keyboard/mouse setup. This results in much larger buttons and menus, so that you can use an imprecise pointing device (your fingers) instead of a mouse, which lets you target things within a few pixels. Haiku, on the other hand, makes full use of the mouse: single clicks, double clicks, multiple mouse buttons, sometimes combined with keyboard modifiers.

Likewise, the Linux kernel these days is mainly designed for either mobile phones (with Android) or big server systems. The desktop version exists, but it is somewhat of a byproduct of the other two. No major company makes a profit from selling desktop Linux systems, so no company puts money or developer resources into improving that part. As a result, Linux is very good at, for example, sustaining very high network speeds with low latency. It can also do embedded real-time work rather well (though not as well as systems dedicated exclusively to that, such as VxWorks, QNX, RTEMS, FreeRTOS, …), and it does a good job at the power-saving strategies needed for a mobile phone. But try running a somewhat heavy processing task, such as a big compilation job, on Linux, and soon enough you will find your music player stuttering, your mouse lagging, or even your entire UI freezing for a few seconds because of the heavy CPU use and disk operations. Fixing this would probably require a tradeoff that Linux doesn’t want to make, because it would degrade server performance. There are some kernel options to improve this, but most Linux distributions don’t enable them, because they don’t bother providing a kernel fine-tuned for desktop use. Their main target is usually servers, with desktop systems as a nice extra.

About the seamless integration:

It is a lot of small things. For example, you can copy and paste or drag and drop content between pretty much any two applications, and they will figure out a way to transfer the data properly (thanks to translators). The look and feel of applications is always the same, and so are the UI conventions (button labels, keyboard shortcuts, …). On Linux, you are probably using a mix of Qt and GTK applications, each with slightly different conventions. Windows used to be quite good at this (maybe in the Windows 95 days?), but not anymore: the OS itself is built on top of 4 or 5 UI toolkits (I lost count), and as a result there is no good definition of what a “native” app looks like. So third-party applications do their own thing. Most don’t even use the standard window decorations (titlebar) anymore; each one reimplements them in a slightly different way. In Haiku, the titlebar, to take a specific example, is managed by the OS, so we can keep tweaking it and adding new functions to it without worrying that some apps are not using what we provide.
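The data exchange described above can be pictured with a deliberately simplified model: the sender offers data in its own format, the receiver lists the formats it understands in order of preference, and a shared roster of translators bridges the gap. The `Translator` struct and `TranslatorRoster` class below are hypothetical names for illustration only; Haiku’s real Translation Kit (`BTranslatorRoster` and friends) has a different and richer API.

```cpp
#include <cassert>
#include <functional>
#include <optional>
#include <string>
#include <utility>
#include <vector>

// Hypothetical translator: converts data from one MIME type to another.
struct Translator {
    std::string from;
    std::string to;
    std::function<std::string(const std::string&)> convert;
};

// Hypothetical system-wide registry shared by all applications.
class TranslatorRoster {
public:
    void add(Translator t) { translators_.push_back(std::move(t)); }

    // Deliver `data` (of type `sourceType`) to a receiver that accepts any of
    // `accepted`, tried in the receiver's order of preference. Returns the
    // chosen type and the (possibly converted) data, or nothing if no route exists.
    std::optional<std::pair<std::string, std::string>>
    deliver(const std::string& sourceType, const std::string& data,
            const std::vector<std::string>& accepted) const {
        for (const auto& wanted : accepted) {
            if (wanted == sourceType)
                return std::make_pair(wanted, data);  // same format, no conversion
            for (const auto& t : translators_) {
                if (t.from == sourceType && t.to == wanted)
                    return std::make_pair(wanted, t.convert(data));
            }
        }
        return std::nullopt;
    }

private:
    std::vector<Translator> translators_;
};
```

Neither application needs to know about the other’s format: a translator installed once is used by every sender and receiver. A real implementation would also have to weigh competing translators against each other, which this sketch ignores.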

This integration also applies “vertically”: when we add a feature to the system, we can change any component up and down the stack. For example, when we added package management to Haiku, it was not just added as an application managing its own database of what’s installed (which is how package managers on Linux typically work, with some notable exceptions). Instead, our package management involved changes from the file manager (to allow double-clicking a package to install it, for example) all the way down to the bootloader (to allow booting a previous state of the system with older packages, similar to Windows “restore points”). Such things are simply not possible when your operating system is assembled from independently developed components. The GNOME and KDE projects need their code to run not only on Linux, but also on FreeBSD and in some cases even on Windows. So if they needed to change something in the operating system kernel, that’s at least 3 kernels to change. Coordinating something like this across different, independently run projects can take years. In Haiku, a single person could do it.
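The “boot a previous state” part can be pictured as keeping a history of package sets instead of editing a single list in place. Below is a hypothetical C++ sketch of that idea; the `PackageStates` class and its methods are invented for illustration, and Haiku’s actual package kit and packagefs work differently in detail. Every install or uninstall appends a new state, and reverting selects an old one without throwing anything away.

```cpp
#include <cassert>
#include <cstddef>
#include <set>
#include <stdexcept>
#include <string>
#include <utility>
#include <vector>

// Hypothetical model: each change to the set of active packages is recorded
// as a new immutable state, so an older one can be picked at boot time.
class PackageStates {
public:
    using State = std::set<std::string>;

    // Start with a single empty state (nothing installed).
    PackageStates() : history_{State{}} {}

    const State& current() const { return history_.back(); }
    std::size_t stateCount() const { return history_.size(); }

    void install(const std::string& pkg) {
        State next = current();
        next.insert(pkg);
        history_.push_back(std::move(next));
    }

    void uninstall(const std::string& pkg) {
        State next = current();
        next.erase(pkg);
        history_.push_back(std::move(next));
    }

    // What a boot menu could offer: the package set of an earlier state.
    const State& stateAt(std::size_t index) const {
        if (index >= history_.size())
            throw std::out_of_range("no such state");
        return history_[index];
    }

    // Make an older state current again. It is copied first because push_back
    // may reallocate and invalidate the reference returned by stateAt().
    void revertTo(std::size_t index) {
        State old = stateAt(index);
        history_.push_back(std::move(old));
    }

private:
    std::vector<State> history_;
};
```

Because reverting is itself recorded as a new state, a bad revert can be undone the same way.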

About the kernel responsiveness:

I have partially answered this in the first answer already. Basically, it means prioritizing low latency over throughput. A “non-responsive” kernel takes the inverse priority, the extreme of which would be a cooperative multitasking system. Such a system lets one task use the CPU as long as it wants, until the task decides it is done with its job, and only then lets other tasks run. In preemptive multitasking, the kernel can decide to interrupt tasks to give other tasks a chance to run more often. But this raises the question: how often do you interrupt tasks? And what do you run first when multiple tasks are waiting for the CPU?
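The difference between the two policies shows up clearly in a toy simulation. The sketch below uses hypothetical functions (no real kernel API is involved): a long task and a short interactive one are both ready at time 0, and each function reports when every task finishes. It ignores the cost of switching between tasks.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <deque>
#include <vector>

// Toy model: all tasks are ready at time 0 and need work[i] ms of CPU.
// Both functions return the time (in ms) at which each task finishes.

// Cooperative: each task keeps the CPU until it is done.
std::vector<int> finishCooperative(const std::vector<int>& work) {
    std::vector<int> finish(work.size());
    int now = 0;
    for (std::size_t i = 0; i < work.size(); ++i) {
        now += work[i];
        finish[i] = now;
    }
    return finish;
}

// Preemptive round-robin: the kernel interrupts a task after `quantum` ms
// and moves it to the back of the ready queue.
std::vector<int> finishPreemptive(const std::vector<int>& work, int quantum) {
    assert(quantum > 0);
    std::vector<int> remaining = work;
    std::vector<int> finish(work.size());
    std::deque<std::size_t> ready;
    for (std::size_t i = 0; i < work.size(); ++i)
        ready.push_back(i);
    int now = 0;
    while (!ready.empty()) {
        std::size_t i = ready.front();
        ready.pop_front();
        int slice = std::min(quantum, remaining[i]);
        now += slice;
        remaining[i] -= slice;
        if (remaining[i] > 0)
            ready.push_back(i);  // preempted, wait for another turn
        else
            finish[i] = now;
    }
    return finish;
}
```

With a 1000 ms task followed by a 2 ms one, `finishCooperative({1000, 2})` gives `{1000, 1002}` while `finishPreemptive({1000, 2}, 10)` gives `{1002, 12}`: the short task finishes after 12 ms instead of about a second, and the long one is only delayed by 2 ms. In a real kernel every switch has a cost, and that cost is the throughput a server-oriented configuration tries not to spend.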

The answer depends on what you want to do. If you run a server system, most likely a lot of your tasks will be answering network requests. In that case, latency doesn’t matter so much: it’s OK to have a network request wait for a few dozen milliseconds, since that is comparable to the network latency (the time for the request to travel through the internet). So your goal is to pick one network request at a time, serve it as fast as possible, then move on to the next one.

In Haiku, since we are a desktop system, there will be many tasks of many different types going on at once. For example, you may have a web browser showing this forum while you write a reply in it; at the same time, a music player is running in the background, and you are also compiling some source code.

What do you want in this situation? First, you want the music to play without stuttering, meaning the music player needs to run often enough to get data from disk, decode it, and send it to the sound card. If the data doesn’t reach the sound card in time, it will make ugly glitch noises, which we don’t want.
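To put a number on “often enough”: a buffer of N audio frames played at R frames per second lasts 1000 × N / R milliseconds, and the player must be scheduled and finish preparing the next buffer within that window. The helper functions below are a hypothetical illustration of that arithmetic, using a simplified model where one buffer plays while the next is prepared; the buffer sizes in the example are illustrative, not Haiku’s defaults.

```cpp
#include <cassert>

// Time (ms) that one buffer of audio lasts at the given sample rate.
double bufferDurationMs(int framesPerBuffer, int sampleRate) {
    assert(framesPerBuffer > 0 && sampleRate > 0);
    return 1000.0 * framesPerBuffer / sampleRate;
}

// Simplified model: while one buffer plays, the player must get the CPU
// (waitMs, the scheduling delay) and read + decode the next buffer (workMs)
// before the sound card runs out of data.
bool avoidsGlitch(double waitMs, double workMs, int framesPerBuffer, int sampleRate) {
    return waitMs + workMs <= bufferDurationMs(framesPerBuffer, sampleRate);
}
```

For example, 441 frames at 44 100 Hz last exactly 10 ms, and 512 frames last about 11.6 ms. A compile job that holds the CPU for a few dozen milliseconds, which would be perfectly acceptable on a server, is therefore enough to cause an audible glitch unless the scheduler preempts it.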

The next thing is handling your typing into the forum. You don’t want this to lag behind: ideally each keystroke should be rendered in the very next frame, but if the system is very busy, maybe you can tolerate a few more milliseconds of delay. Beyond that, it gets very annoying, increases your number of typos, or even makes it impossible to write anything correctly (keystrokes could start getting lost).

Finally, once all these higher-priority tasks are done, the remaining CPU time can go to the background source code compilation job.

So, that’s the high-level view. But how do we actually achieve it? The system scheduler has the job of picking which task to run next, and of deciding when to kick running tasks off the CPU to make room for other ones. The scheduler in Haiku “kicks” tasks out a bit more often, especially if a higher-priority task needs to run.

To know which tasks are higher priority, the system is designed so that when creating a task, you have to indicate its priority, and the API provides some guidelines as to what the priority values mean (something like “display priority” for tasks involved with showing something to the user, “realtime priority” for our sound related work, “background priority” for the compiler, and so on). Applications written for Haiku will use these, and the scheduler will know what to do with them. Ported applications may or may not use these things, depending on how they were originally designed and how much effort went into porting them.
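On Haiku, the priority is passed when the thread is created, for example as the third argument of `spawn_thread(function, name, priority, data)`. The sketch below is a hypothetical, strict-priority version of the scheduler’s choice. The constant values are copied from the `B_*_PRIORITY` definitions in Haiku’s `<OS.h>` so that it compiles on any system; `ReadyThread` and `pickNext` are invented names, and Haiku’s actual scheduler is considerably more elaborate.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// Priority values mirrored from Haiku's <OS.h> so this sketch builds anywhere.
constexpr int32_t kLowPriority = 5;         // B_LOW_PRIORITY
constexpr int32_t kNormalPriority = 10;     // B_NORMAL_PRIORITY
constexpr int32_t kDisplayPriority = 15;    // B_DISPLAY_PRIORITY
constexpr int32_t kRealTimePriority = 120;  // B_REAL_TIME_PRIORITY

// Hypothetical entry in the ready queue.
struct ReadyThread {
    std::string name;
    int32_t priority;
};

// Simplified strict-priority pick: run the highest-priority ready thread.
// Among equal priorities, the one that has waited longest (earliest in the
// list) wins, because only a strictly greater priority replaces `best`.
std::size_t pickNext(const std::vector<ReadyThread>& ready) {
    assert(!ready.empty());
    std::size_t best = 0;
    for (std::size_t i = 1; i < ready.size(); ++i) {
        if (ready[i].priority > ready[best].priority)
            best = i;
    }
    return best;
}
```

With a compiler at low priority, a browser window thread at display priority and an audio thread at real-time priority all ready, `pickNext` chooses the audio thread first, then the window, then the compiler, matching the order described above. A pure strict-priority policy like this one would starve the compiler if the higher-priority threads never slept; a real scheduler also has to make sure low-priority work eventually runs.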

About ported applications:

I think these can’t fully benefit from everything we have to offer. Personally, I don’t have any of them installed on my Haiku machine currently. The people porting these applications have achieved pretty good results in making them look and feel as close as possible to native ones, sometimes against the will of the original application developers. It is not perfect, but it is sometimes useful to have these applications until someone decides to write a Haiku-specific replacement.

About concepts and technologies:

I can think of a few things that Linux still does not do:

- Use of filesystem attributes that are also indexed and can be used as a database to manage and locate your files. We use this for contact files, e-mail, music and video files, web browser bookmarks, and many other things.

- “Translators”, an API that standardizes encoding and decoding of files. This means an application written for BeOS in the 1990s and executing on Haiku can save and load AVIF files, a format that was designed long after these applications were written. And this comes at no effort to the application developer.

- Use of a virtual filesystem for package management, which allows instantly enabling and disabling packages, as well as reverting to past versions of the installation in case something is broken.
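The first point, indexed attributes, can be pictured as the filesystem maintaining, for each indexed attribute, a map from values to files, so that a query never needs to scan every file. The C++ model below is a hypothetical in-memory illustration: the `AttributeIndex` class is invented, and the attribute name `MAIL:from` is used only as an example. In Haiku the indexes live in the BFS filesystem itself and are searched with a query language.

```cpp
#include <cassert>
#include <map>
#include <set>
#include <string>

// Hypothetical in-memory model of indexed file attributes.
class AttributeIndex {
public:
    // Set (or replace) an attribute on a file, keeping the index in sync.
    void setAttribute(const std::string& path, const std::string& attr,
                      const std::string& value) {
        removeAttribute(path, attr);
        files_[path][attr] = value;
        index_[attr][value].insert(path);
    }

    void removeAttribute(const std::string& path, const std::string& attr) {
        auto f = files_.find(path);
        if (f == files_.end()) return;
        auto a = f->second.find(attr);
        if (a == f->second.end()) return;
        index_[attr][a->second].erase(path);  // drop the old value's entry
        f->second.erase(a);
    }

    // Equality query: which files have attr == value? Answered from the
    // index alone, without visiting every file.
    std::set<std::string> query(const std::string& attr,
                                const std::string& value) const {
        auto i = index_.find(attr);
        if (i == index_.end()) return {};
        auto v = i->second.find(value);
        if (v == i->second.end()) return {};
        return v->second;
    }

private:
    // path -> (attribute -> value)
    std::map<std::string, std::map<std::string, std::string>> files_;
    // attribute -> (value -> paths)
    std::map<std::string, std::map<std::string, std::set<std::string>>> index_;
};
```

Changing an attribute updates the index in the same step, which is what keeps a query such as “all e-mails from this sender” instant no matter how many files there are.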

There are a few more in BeOS, but I think Linux did make some progress on several of them.

And finally about the NewOS kernel:

This is the kernel we based our work on, because it was similar to the BeOS kernel (but not identical). We needed to make it closer to the BeOS one, so we made a copy of the kernel sources and have been modifying and evolving it since. The author of NewOS, on the other hand, went on to create more modern kernels and operating systems: he wrote the lk microkernel, and I think he now works on the Fuchsia operating system at Google. That explains why he doesn’t develop NewOS anymore, but our kernel has diverged so much from his original work anyway that this isn’t really relevant at this point.