The situation is a lot more complicated than that.

Use AI in your personal projects if you want. I may dislike it (for ecological and ethical reasons, and because I spend more time than I’d like banning AI bots that scrape my website, saturating my internet connection and raising my electricity bill, since my server is home-hosted).

But submitting code to someone else’s project is different. The expected workflow is that you take some time to study the code, make some changes, and put some effort into your contribution. Then the people reviewing your work can ask questions, and if the code review is done properly, you can use it to share your knowledge with the developers, the developers can share their knowledge with you, and in the end, not only does the code get better, but everyone also knows the code a bit better.

This process is very important for both sides: for the person submitting new code, who learns new things and makes better contributions, and for the person reviewing it, who learns how the code they are about to merge works. They are most likely going to maintain that code in the future. So this is how we keep the maintainers understanding what we do, and also how we grow new contributors to the project.

Now imagine the person submitting the code is not the one who wrote it. The reviewer asks them questions. They don’t know the answers, of course, because they didn’t write the code. So they relay each question to the person who actually wrote the code, and copy the reply back. I think you can see how this turns into a huge waste of time for everyone.

Finally, take this last case and replace any one of the three people involved with an LLM. I think you can see how things get even worse.

So, use LLMs and assistants any way you want in your own projects. But keep in mind that they will not give you as good an overall understanding of the code as you would get otherwise. Some projects may be ready to accept that; others have decided to set high standards for the quality of contributions, and it’s unlikely that you can meet them with only an LLM. You could get there with a little help from an LLM, but for every person who does that, there will be a dozen others who blindly paste the output of the LLM into their code editor and try to submit changes. They’re in it for the fame without the hard work, especially in popular projects (cURL is a very good example of this).

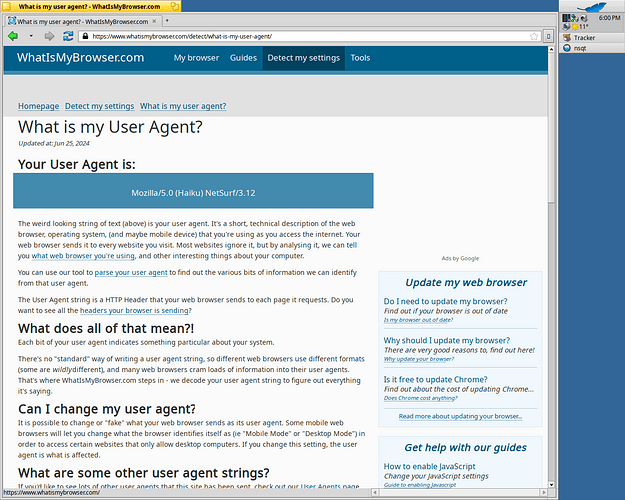

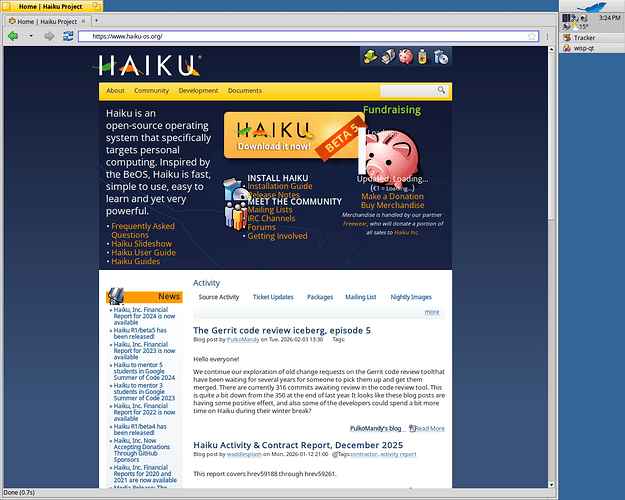

I don’t really see a solution to that except warning people that such behavior is not welcome. In Haiku, we can try to be a little tolerant of it. But NetSurf, with an even smaller team? I totally understand that they don’t want to spend their time on such things. If they wanted to generate code using an LLM, they could do it themselves, without adding a person between them and the LLM. That would surely be more efficient.

So, in the end: do not submit someone else’s work that you don’t understand to an open source project. It will go badly. Also, do not submit work that’s primarily the output of an LLM; that is similarly problematic. If you used the LLM as a kind of pair programmer and wrote, or at least carefully reviewed, the code yourself? Then maybe you can give it a try.