Hello Haiku Community,

My name is Matei, and I am a first-year Computer Science student. I am deeply interested in operating systems and have been active in various OSDev communities for several years.

After hearing about GSoC, I started browsing the participating organizations and immediately clicked on the “Operating Systems” category. I was honestly surprised to see that category listed, and quite excited. I was already familiar with most of the organizations in it, but Haiku stood out to me because of its focus on personal computing.

My Background and Skills

- Solid experience in C, with knowledge of C++

- Familiar with Java and C#, although I have used them less frequently in the past 2-3 years

- Knowledge of x86_64 and AArch64 assembly

- Experience with QEMU and GDB

- Experience with build systems such as Make and Meson, as well as an OS bootstrapper called Chariot

- Writing an operating system from scratch (see below)

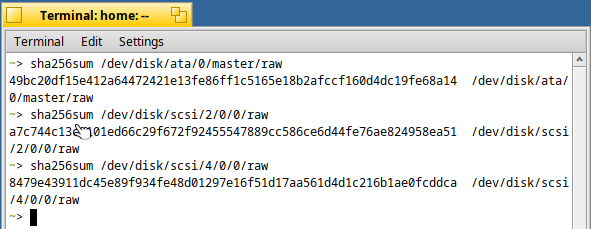

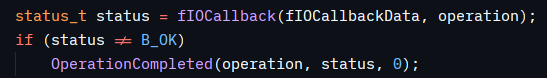

I am developing a hobby OS called LykOS. It currently implements basic kernel functionality such as physical and virtual memory management, a VFS with RAMFS, ELF loading, support for kernel modules, and a driver system (PS/2, AHCI, NVMe), among other features.

After reviewing this year’s GSoC ideas, the projects that interest me most (in order) are:

- Universal Flash Storage support

- Multiple monitor output in app_server

Over the next few days, I plan to explore the internals of the Haiku kernel to gain a better understanding of how its components interact.

I would love the opportunity to contribute to Haiku. I believe it would be a great learning experience for me, as it aligns perfectly with my interests. I am very eager to learn and contribute wherever I can.

A few questions:

- Which of the two projects do you think would fit my background better?

- Which parts of the codebase should I study first for each project?

- Is there a bug or feature ticket related to the area I want to contribute to that currently needs attention? I would like to work on one as my required code contribution.

Best regards, Matei