Interesting (and fun) article by Alyssa Rosenzweig:

I think Apple uses some sort of “multithreading machine learning algorithm”, managed by dedicated software and the AI chip, to get maximum performance out of its graphics processor …

So rather than an impossible bug, probably some component is missing entirely, and even if they managed to get some video acceleration with a good yield, it would still perform poorly compared to macOS because of that missing piece.

This insight comes to me from a video I saw a few years ago about some AI techniques to optimize performance …

I am attaching the video, cued to the minute where it is discussed.

Sheesh, the article explains what it is about… It’s not related to AI algorithms at all.

No, the article does not explain at all what it is. He makes many attempts at reverse engineering and many hypotheses, running into tedious problems one after the other, and it is not at all clear how to solve them definitively. From this I hypothesized that an essential component is probably missing, one that the author of these experiments is not considering at all. And since in the years preceding the release of the Apple M1 there was talk of these new optimization techniques based on machine learning, it is possible that these mysteries fit together into something like this.

You mean she

The article explains quite precisely what the bug was, what the path to the fix was and what the ultimate fix is. I think it is quite insulting to just dismiss that work and claim that they are missing something. They fixed the bug and also showed how they fixed it. And again nothing to do with AI optimization or anything of the sort.

In late 2020, Apple debuted the M1 with Apple’s GPU architecture

So this person has been working on this for 2 years, but no, obviously she missed the obvious point you saw in a random marketing video that’s not even about Apple hardware? Come on, why didn’t you write that driver before she did, then?

Sorry but you are just rude and inappropriate here.

the GPU renders only part of the model and then faults.

How can this be related to artificial intelligence?

Modifying the shader and seeing where it breaks, we find the only part of the shader contributing to the bug: the amount of data interpolated per vertex.

That doesn’t look related to AI at all…

Ah-ha. Because AGX is a tiler, it requires a buffer of all per-vertex data. We fault when we use too much total per-vertex data, overflowing the buffer. …So how do we allocate a larger buffer?

Here we go, a much more reasonable explanation that doesn’t involve AI at all!

It’s prudent to observe what Apple’s Metal driver does.

Ok, finally here are the real facts and not guesses from a random unrelated video! Will she find some AI hidden in that driver?

we learn from one WWDC presentation (Harness Apple GPUs with Metal - WWDC20 - Videos - Apple Developer): But it may cause a Partial Render if full. A Partial Render is when the GPU splits the render pass in order to flush the contents of that buffer.

Hey, the answer was in documentation from Apple since the very start. And, by the way, it does not involve AI.

She then proceeds to tweak the Apple code to make it behave in the same way as hers, quite convincingly proving that the issue is well understood. And then, of course, she fixes the bug.
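To make the quoted mechanism concrete, here is a toy sketch of a tiler with a fixed-size buffer for per-vertex data. Everything in it (the `ToyTiler` class, `render_pass`, the sizes) is made up for illustration and is not AGX's or Metal's real interface. It only shows the two behaviours described in the article: fault when the buffer overflows, or flush it with a partial render and carry on.

```python
from dataclasses import dataclass, field


class ParameterBufferOverflow(Exception):
    """Raised when per-vertex data no longer fits and cannot be flushed."""


@dataclass
class ToyTiler:
    # Hypothetical model: names and sizes are assumptions, not real hardware.
    buffer_capacity: int          # bytes available for per-vertex data
    allow_partial_render: bool    # may a full buffer be flushed mid-pass?
    used: int = 0
    partial_renders: int = 0
    pending_draws: list = field(default_factory=list)
    rendered_draws: list = field(default_factory=list)

    def submit(self, name, vertex_count, bytes_per_vertex):
        needed = vertex_count * bytes_per_vertex
        # Simplification: a single draw larger than the whole buffer is
        # treated as an error in this toy model.
        if needed > self.buffer_capacity:
            raise ParameterBufferOverflow(f"{name} is larger than the buffer")
        if self.used + needed > self.buffer_capacity:
            if not self.allow_partial_render:
                # Without a flush, the pass renders only part of the scene.
                raise ParameterBufferOverflow(f"{name} overflows the buffer")
            # Partial render: flush what is buffered, then keep going.
            self._flush()
            self.partial_renders += 1
        self.used += needed
        self.pending_draws.append(name)

    def _flush(self):
        self.rendered_draws.extend(self.pending_draws)
        self.pending_draws.clear()
        self.used = 0

    def finish(self):
        self._flush()
        return self.rendered_draws


def render_pass(draws, capacity, allow_partial_render):
    tiler = ToyTiler(capacity, allow_partial_render)
    for name, vertex_count, bytes_per_vertex in draws:
        tiler.submit(name, vertex_count, bytes_per_vertex)
    return tiler.finish(), tiler.partial_renders
```

With three draws of 1600 bytes each and a 4000-byte buffer, the version without partial renders faults on the third draw, while the version with them finishes the pass after one flush. Nothing in this needs more than a counter and a buffer.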

Guys, sorry, I do not want to stir up controversy, and I do not even insist on this. We are in the realm of hypotheses here: I can be totally wrong, and that’s okay, but there is also some small chance that I guessed right.

I am not saying that the author of the article has done anything wrong. Probably, for a single core or for the single compute units, the reverse engineering is being done correctly. But as oddities emerge that should not emerge, the author of the article says they are bugs. Are they really bugs?

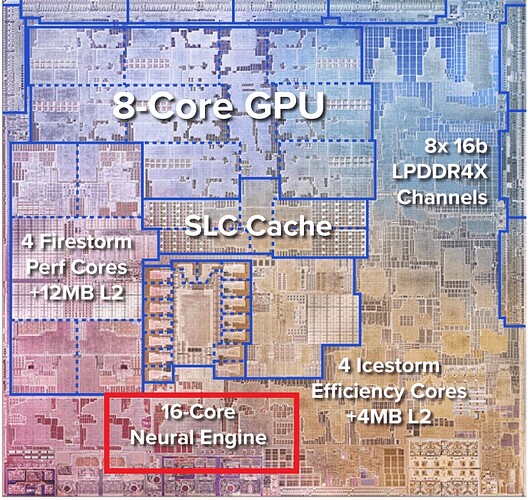

I’m just assuming that, as the M1 processor has an 8-core CPU, 8 GPU cores, 128 EUs (execution units), 1024 ALUs (arithmetic logic units) and a 16-core Neural Engine, there is some chance that Apple engineers are using their technologies in a heuristic way, and a good chance that they don’t make it explicit, so as to gain a competitive advantage by using some algorithm to manage performance, energy efficiency, etc. … I don’t understand why this possibility should be discarded: the technologies are all here, and they have every incentive to make the best use of them.

I continue to hold this belief precisely because I see that suitable techniques and technologies already exist. I have followed several courses on how machine learning techniques operate, and I have even seen that someone has already developed usable machine learning tools for generic compiler optimization. Everything points this way …

AutoPhase: Compiler Phase-Ordering for HLS with Deep Reinforcement Learning

Did you even read the article? She (the Reverse Engineer) describes her journey of finding the rendering problem. After trial and error, reading docs and making some assumptions, she finds the reason the bunny wouldn’t render correctly and fixes her driver.

This reverse engineering process has nothing to do with AI.

Of course I have read the article, more than once,

but this has not at all dispelled my suspicions; it has instead convinced me more and more that I could be right, that they are not bugs but arcane modes of operation that are not easy to understand through attempts at reverse engineering,

She managed to overcome these strange bugs by making “fixes”, workarounds …

Assuming these GPUs work great on macOS: these are CUSTOM GPU modules, not PowerVR. The author suspects that they are PowerVR. Probably Apple, to maintain a certain level of compatibility with macOS apps and their internal architectures, has adapted these proprietary GPU modules to work in a similar way to a PowerVR. What I’m doing is a kind of reverse engineering based on news reports: Apple has had no commercial relationship with Imagination (PowerVR) since 2017, even before the release of the M1.

It is also likely that Apple has hired some ex-Imagination engineers to build its own custom GPU.

I repeat, I could be totally wrong here, but based on the information I have, from my own point of view and not from a strictly technical one, I remain convinced of my intuitions.

It works with the snippet loaded and doesn’t without it.

Clearly it’s a completely unrelated mathematical concept at work.

You can’t just ignore the hardware’s inner workings and claim “it’s AI”.

AI simply is /not/ fast. It can help you make some things faster, but that is more about optimizing the code beforehand, which you then pass to Metal. It makes no sense for Metal to work “with AI”.

Not to mention that you showed the PCB above, and the GPU and neural engine are very clearly on opposite sides of the chip (and the neural engine is about half the size).

Why do you think that this considerably small segment on the other side of the chip magically influences the much bigger chip actually used in a very basic operation (dealing with a buffer overflow)?

It would be interesting to compare the performance of these GPU drivers for Linux with the 3D graphics performance of macOS. That would silence me definitively, or not …

I mean, if the Linux drivers turn out inferior but still acceptable in a comparison between the two systems, then I am wrong. But if the performance is vastly better on macOS, by dozens of times, then there is some chance that I have guessed right.

(also taking into account energy consumption)

“Neural Engine” is just a PR marketing term and has nothing in common with how real human brain neurons work. It accelerates certain kinds of compute tasks often used in machine learning, but it can also be used for other tasks such as scientific numerical computations.

Someone has spent two years reverse engineering the hardware and writing a driver for it, and they found nothing. You looked at two YouTube videos, found a screenshot showing an unrelated part of the hardware, and are making wild guesses based on it. Who is more likely to be right?

Also, my Intel CPU has a “neural engine” too. But it is not used by the graphics driver; it is a completely independent piece of hardware. Intel provides an SDK for it and I can use it if I want to run some AI stuff. It is not used by the graphics part of the hardware or by the graphics driver.

As you have seen, because you have linked some software that allows using such an engine, these “AI” cores are available to the user of the hardware to do whatever they want with them. That would not be possible if the hardware was already being used by the 3D acceleration to display things on screen.

Also, I don’t see what they would be doing with artificial intelligence here. You seem to treat it as if it was some kind of magic solution to every possible problem? “My computer is slow, AI will fix it”? That is not how AI works.

Given how I put it, and not being able to spell out the amount of time I dedicate to this personal research, I did what I could: I posted a couple of videos (one a seminar, the other a set of forecasts of technological evolution that, after a few years, is already somewhat obsolete) which seemed to me the most explanatory in describing where technological evolution has really gone today, especially with the adoption of deep learning techniques, a quantum leap.

You are right, it is not much, and my intuition may seem like a kind of presumption, but please take a look at some of the videos on papers presented on this channel regarding deep learning, which I believe is evolving exponentially, that is to say progress doubles every 6 months.

Two Minute Papers

And finally, if you have time, just to get a sense of the progress “on silicon”, “on hardware”: here too I do not want to appear presumptuous, but I have the feeling that it is not clear to you where we are heading, beyond the classic “systems”.

Anastasi In Tech, Hardware & Chips & AI

I really ask you to take a look at it, and I hope to appear less presumptuous once you have been able to see what is coming in terms of progress in these areas. I do this kind of study and research out of passion, many times a week, and it is likely that information I have absorbed and take for granted sounds a little too much like fiction to others…

However, I remain curious about the question I asked in my previous comment, whether I am right or wrong that macOS 3D graphics are probably dozens of times more efficient in energy and performance…

I have known and admired the author of the article, Alyssa Rosenzweig, for years. She is the same one who created the Linux drivers for ARM Mali GPUs, Panfrost and Lima. Years ago I was delighted to be able to unlock cheap TV boxes and install Armbian on them with 3D acceleration thanks to these drivers … here you can recognize me as a user trying, together with other Armbian users, to make it run as well as possible on the Rockchip RK322X … But in the case of this driver for the M1 GPU, I repeat without presumption that I am still convinced that Apple, from the M1 onwards, is using its deep learning modules in some way to optimize performance and energy efficiency. Until I see the M1’s graphics acceleration and energy efficiency on Linux at levels comparable enough (I don’t say higher) to prove to myself that this intuition is wrong, I won’t change my mind.

None of these specifically reference the M1, though. As long as there is no evidence that Apple uses the Neural Engine in conjunction with the GPU or for performance/energy efficiency, this is all closer to conjecture than presumption.

For the record, it is still very possible for Linux and other OSes to never really approach macOS with regards to performance and energy efficiency even if the latter doesn’t utilise the Neural Engine for any of those. The M1 and macOS were designed with each other in mind, after all. And obviously, Apple engineers would naturally know more about the M1 than anyone else since they made it and macOS too.

I also don’t think that it makes much sense to extensively use NPUs just to improve general system performance and energy efficiency. Dedicated ML/NN workloads need those hardware cores more and an easier way to speed up a system and/or make it more efficient is to just add more general-purpose cores, so that either operations are completed faster to get back to idle as soon as possible or operations are completed within reasonable timeframes at lower clockspeeds to save energy.
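To put rough numbers on that trade-off, here is a minimal sketch. Every figure in it is invented for illustration and not measured on an M1 or any other chip, and `energy_joules` is just a hypothetical helper: energy over a fixed time window is active power times active time plus idle power times the remaining time.

```python
def energy_joules(work_units, throughput, active_watts, idle_watts, window_s):
    """Energy used over a fixed window: run the work, then sit idle.

    All parameters are hypothetical inputs for a back-of-the-envelope model:
    throughput is work units per second while active.
    """
    active_s = work_units / throughput
    if active_s > window_s:
        raise ValueError("work does not finish within the window")
    idle_s = window_s - active_s
    return active_watts * active_s + idle_watts * idle_s


# Made-up scenario: 100 units of work that must finish within 10 seconds.
# "Race to idle": fast, power-hungry cores finish early, then idle.
race_to_idle = energy_joules(100, throughput=50, active_watts=10,
                             idle_watts=1, window_s=10)
# "Low clocks": slow cores at low power, busy for the whole window.
low_clock = energy_joules(100, throughput=10, active_watts=1.5,
                          idle_watts=1, window_s=10)
print(race_to_idle, low_clock)
```

Which strategy wins depends entirely on the power figures you plug in, which is the point: both are ordinary scheduling and clocking decisions, and neither needs an NPU.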

Also covered on Hackaday now.

Again, it has nothing to do with any AI.

Well, it will be interesting to see what happens in the future … Maybe Alyssa will realize that there is some possibility that Apple is using its “tensor cores” to optimize performance and energy efficiency. Maybe she will realize this when she sees (if I’m right) that the gap in performance and energy optimization is too great not to conclude that Apple is using “some such trick”. At that point maybe she will start studying how deep neural optimizations work and implement them, and in doing so she will manage to implement them for any GPU, SoC or CPU that has neural cores …

NVIDIA has been implementing neural cores in its GPUs for years, well before Apple did in the M1; Intel and AMD likewise are heading down this path. Why do you think they are doing it? Do you really believe that the Apple engineers, when they decided to launch the M1 so aggressively against the competition, did not have the intuition to use these technologies in symbiosis?

Well, come on, I’ll spell out why I’m sure Apple is using its tensor cores to significantly improve performance …

It is just an example: in Blender, now that Metal support has recently been officially implemented, it is evident, and the performance is more or less similar to that of Nvidia GPUs using CUDA and OptiX …

It is obvious that on macOS Metal is used by all applications, even without the developers making “explicit use” of it.

You’re like a dog with a bone, aren’t you? You just can’t let go.

It doesn’t even matter what happens in the Apple chips. The article and the OP are about the reverse engineering and the driver. And you saying you followed her and her work… come on, in a posting before that you said ‘he’ instead of ‘she’… makes your story really believable. Not.

Anyway, I’m done in this thread. Good luck.