A kernel thread will, yes.

It’s a matter of simplicity.

You could build things with a single thread or a thread pool and then have something decide how to schedule window repaint events onto these threads. But why not just start a thread for each window? Then you can let the already existing system scheduler schedule the threads, and no extra work is needed.

Less code is written, and the performance cost is not that high (after all, this works as you can see in Haiku).
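To make the "one thread per window" idea concrete, here is a minimal sketch. It is not Haiku code: Python's `threading` and `queue` modules stand in for kernel threads and message ports, and `Window`, `post`, `quit` and `demo_thread_per_window` are hypothetical names made up for illustration.

```python
import queue
import threading


class Window:
    """Hypothetical stand-in for a window that owns a thread and a message queue."""

    def __init__(self, name):
        self.name = name
        self.handled = []                  # (thread name, event) pairs, for inspection
        self._inbox = queue.Queue()
        # Illustrative thread name; one thread is created per window.
        self._thread = threading.Thread(target=self._loop, name=f"w>{name}")
        self._thread.start()

    def post(self, event):
        """Callable from any thread; it only enqueues the event."""
        self._inbox.put(event)

    def quit(self):
        self._inbox.put(None)              # sentinel asking the loop to exit
        self._thread.join()

    def _loop(self):
        # No application-level scheduling: the system scheduler decides
        # when this thread gets to run.
        while True:
            event = self._inbox.get()
            if event is None:
                break
            self.handled.append((threading.current_thread().name, event))


def demo_thread_per_window():
    windows = [Window("Main"), Window("Settings")]
    for w in windows:
        w.post("repaint")
        w.post("resize")
    for w in windows:
        w.quit()
    return {w.name: w.handled for w in windows}
```

Each window handles its own events in order, and nothing in the application decides which window runs next.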

However, the environment changes. For example, new CPUs have more registers, which makes context switches take more time (because you have more registers to back up and restore). Context switches from one app to another are more costly (you need to change the memory map, and possibly clear some of the CPU caches), and when you trigger a context switch, you never know what’s going to be rescheduled.

However, we also get a lot more cores, and each core has “hardware” threads (basically, two sets of registers that are attached to the same CPU core). This means you can run multiple threads at the same time on each CPU core. As a result, there are fewer context switches, and this compensates for the extra cost of switching. So, in the end, the “lots of threads” approach continues to work.

… looks like app == process here. As a grey-bearded old UNIX guy, I find this discussion has its somewhat humorous aspect. It’s commonplace in that world to put an “application” together out of a bunch of utilities run by a shell script, possibly running concurrently and connected by “pipe” devices. So not only do you have multiple processes complete with their own virtual memory maps, you’re going through the whole execution process as many times as necessary for possibly somewhat trivial tasks like “does a file with this name exist” or “extract the second word from this string”. Some of those faculties were added to the shell itself eventually, but not when we were running on Motorola 68030s at 16 MHz with 14 MB of RAM. It was not a problem. You have to know what to optimize.

Thanks for the reasoning about the threads.

I know the internals of all this at a low level (comp eng here), but lacked the Haiku dev point of view (and probably a few more things).

I know it may depend on the applications’ use case, but I would like to run a test myself: a BApplication as it is now, with a thread per window, compared against one with a single per-application scheduler thread that delivers events to windows that have no threads of their own.

Of course, this may be overengineering with current hardware and not gain much resource-wise.

I guess I can clone the current source code and do it someday.
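For comparison, the alternative design being proposed (one per-application thread delivering events to windows that have no thread of their own) could look roughly like this. Again, this is only a sketch in Python under assumed names (`PlainWindow`, `Dispatcher`, `demo_single_dispatcher`), not something taken from the Haiku source.

```python
import queue
import threading


class PlainWindow:
    """Hypothetical window with no thread of its own."""

    def __init__(self, name):
        self.name = name
        self.handled = []

    def handle(self, event):
        self.handled.append(event)


class Dispatcher:
    """One thread per application that delivers events to every window."""

    def __init__(self):
        self._inbox = queue.Queue()
        self._thread = threading.Thread(target=self._loop, name="dispatcher")
        self._thread.start()

    def post(self, window, event):
        self._inbox.put((window, event))

    def quit(self):
        self._inbox.put(None)              # sentinel asking the loop to exit
        self._thread.join()

    def _loop(self):
        while True:
            item = self._inbox.get()
            if item is None:
                break
            window, event = item
            # A slow handler here delays every other window of the application.
            window.handle(event)


def demo_single_dispatcher():
    a, b = PlainWindow("A"), PlainWindow("B")
    dispatcher = Dispatcher()
    dispatcher.post(a, "repaint")
    dispatcher.post(b, "repaint")
    dispatcher.post(a, "resize")
    dispatcher.quit()
    return a.handled, b.handled
```

The trade-off is visible in `_loop`: there is only one thread to switch to, but the application now owns the ordering and fairness between its windows.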

Okay. So let me review everything I’ve gathered so far.

Haiku has servers which wait for incoming requests from teams and process them, rather than being ‘assigned’ to applications. ProcessController allows one to see things by ‘team’ first, and subdivides into threads. And users can adjust what threads get the most time personally by setting a different priority/affinity, and it changes it; but I can see where this could easily break the running session! If correct, then this should be considered more of a developer’s tool/toy than something to tell a user to play with freely.

Every application window drawn on the Haiku desktop gets its own thread, and so does each task given to applications (like, say, if: Tracker is emptying the trash while copying, while Web+ fetches pages, while StyledEdit saves a document, etc.), which allows Haiku to have each application execute all the above operations simultaneously – but yet, also does so in turn (sort of in a queue of threads), without adversely affecting the performance of other teams and/or applications. And things can happen live, like changing values in an application. Is all this right?

Is the power of being able to rapidly switch between threads what makes Haiku unique in and of itself? Is what I just wrote the main point of it a regular user could read and understand about Haiku?

I sincerely ask all this as I am familiar with apps on the Mac, and am curious what makes a multi-threaded application on Haiku truly unique. (For those thinking ‘man, this guy is an idiot’, please note I had tried Haiku first, then got interested in BeOS from there, so I’m not as knowledgeable on the internals of the OS as the Be/Haiku veterans and power users here who have naturally gone from Be to Haiku, despite me having tried to read the BeOS Bible.) Personally, (again, from what I’ve learned so far), I’m beginning to think in the 1990s Be had a huge advantage, back when tasks were single threaded or weren’t fully multi-threaded, but now, I presume today’s GCD-enabled applications are roughly equivalent in terms of performance, just on a more bloated system below than Haiku (forgetting the layers of mess on the X.org DEs, as I’m talking macOS, not Ubuntu, etc. in this case). Am I erroneous in presuming this?

Again, I want to be sure I get everything on this topic totally right, be fair and unbiased, and also make sure I’m not misunderstanding this before publishing it in an article. Thanks for your comments/help with this (and patience), everyone. This is definitely teaching me more about Haiku. And I think I still have a lot to learn!

That second part is more a matter of application design. You’ll do something in a separate thread if you need to, but at the cost of breaking down the procedure around that task, so it’s comparatively rare to do that. (I take you to mean, the I/O performed within an application executes in separate threads, but “each task given to applications” is ambiguous.)

Not at all unique; roughly every OS has threads and switches rapidly between them. There may not be anything about threads that a user needs to know: they don’t make Haiku operate any differently from a user’s perspective. Programmers certainly need to understand how it works, not users. Users may certainly be interested to know that Haiku somewhat distinctively uses threads in its UI, and provides a programming environment with particularly convenient support for thread programming. I’m just saying it’s somewhat academic if you aren’t going to write an application yourself.

Not just windows which are drawn. All windows in the BeOS design have threads. For example, WebPositive has a “Settings” window that you can open, so whenever WebPositive is running there’s a thread named “w>Settings” owned by the WebPositive team. This thread would be responsible for any events to do with that Settings window. Even if you never use it, the thread just sits there idle and is cleaned up when WebPositive exits. The same is true for other windows in WebPositive like the Save window or Downloads list.

For windows this is just built into BeOS (and thus Haiku). For anything else, such as the examples you gave like emptying the trash, copying files, or saving documents, the application programmer needs to make specific arrangements to create a separate thread for each separate thing they want happening “at once”.
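As a rough illustration of "making specific arrangements", the sketch below has a window loop hand a long-running job to a worker thread so that it can keep handling other events in the meantime. It is plain Python with made-up names (`copy_files`, `window_loop`, `demo_offload`) and uses `threading.Event` objects only to make the ordering of the demo deterministic; a real application would not need them.

```python
import queue
import threading


def demo_offload():
    inbox = queue.Queue()
    log = []                               # events the window loop handled, in order
    io_may_finish = threading.Event()      # stands in for slow I/O completing
    repaint_done = threading.Event()
    copy_reported = threading.Event()

    def copy_files():
        # Hypothetical long task: it blocks without blocking the window loop.
        io_may_finish.wait()
        inbox.put("copy finished")

    def window_loop():
        while True:
            event = inbox.get()
            if event == "quit":
                break
            log.append(event)
            if event == "start copy":
                # The programmer explicitly creates the extra thread.
                threading.Thread(target=copy_files).start()
            elif event == "repaint":
                repaint_done.set()
            elif event == "copy finished":
                copy_reported.set()

    window = threading.Thread(target=window_loop)
    window.start()
    inbox.put("start copy")
    inbox.put("repaint")
    repaint_done.wait()                    # the repaint ran while the copy was pending
    io_may_finish.set()
    copy_reported.wait()
    inbox.put("quit")
    window.join()
    return log
```

The repaint is handled while the copy is still pending, which is the whole point of moving the copy off the window's thread.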

I don’t know anything specifically about what WebPositive is up to there, but at a guess, it may be invoking BWindow::Hide() or something to the same effect. If you just write a simple application that opens a window or two, I think you’ll see the threads only after the windows appear. Otherwise we’d be limited to only a predetermined set of windows per application, but actually a single application can open windows as long as there are system resources for them. Ordinarily the thread is started if necessary by BWindow::Show().

That’s correct. Web+ needs to keep the window live at all times for simplicity, because it can, e.g., update a download status while the downloads window is not visible. So it will just keep them hidden.
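The behaviour described here (the thread appears on the first show, and hiding the window leaves it running so the window can still receive updates) can be modelled in a few lines. This is a toy model in Python, not the BWindow implementation; `LazyWindow` and its methods are hypothetical.

```python
import queue
import threading


class LazyWindow:
    """Toy model: the window thread is created on the first show()."""

    def __init__(self, name):
        self.name = name
        self.visible = False
        self.status = None
        self._inbox = queue.Queue()
        self._thread = None

    def show(self):
        if self._thread is None:           # start the thread only if necessary
            self._thread = threading.Thread(target=self._loop, name=f"w>{self.name}")
            self._thread.start()
        self.visible = True

    def hide(self):
        self.visible = False               # the thread keeps running

    def has_thread(self):
        return self._thread is not None and self._thread.is_alive()

    def post(self, status):
        self._inbox.put(status)

    def quit(self):
        if self._thread is not None:
            self._inbox.put(None)          # sentinel asking the loop to exit
            self._thread.join()

    def _loop(self):
        while True:
            message = self._inbox.get()
            if message is None:
                break
            self.status = message          # e.g. a download progress update


def demo_hidden_window():
    window = LazyWindow("Downloads")
    before_show = window.has_thread()
    window.show()
    window.hide()
    while_hidden = window.has_thread()
    window.post("download 50%")            # still delivered while hidden
    window.quit()
    return before_show, while_hidden, window.visible, window.status
```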

On threads, as I think I wrote earlier, there is nothing special about what we do. Many other OSes have support for threads. The difference is that our API either forces devs to use threads or makes it much easier for them to do so. There are also some hints, for example on which priority to set for each thread. This just makes sure the feature is used, and used correctly. You could do the same on any other system.

Okay, I may not be the best reviewer in the world, but I promised I’d put this article out there… and I did. So, the article I’ve been saving, I’ve finally published on my blog as “What makes BeOS and Haiku unique” and hope to God (literally) that I got everything in it right.

I liked the article and found it matched what I experienced, but the link gives me a “not found” result. I used the blog menu to read it.

Thanks! Which link is broken so I can fix it?

You gave us 2018/10/22/what-makes-beos-and-haiku-unique/

What works is 2018/11/30/what-makes-beos-and-haiku-unique/

It’s nice work; crediting Haiku performance to threads may not be very well founded, as discussed earlier here, but put at the end like that, there’s little harm in it.

The only thing that seemed weird to me was the business about read-only directories - referring to the package filesystem, which does support only read-only access via standard filesystem operations, but … wasn’t really clear what you thought was interesting about that.

Thanks! I’ve added in the updated link for 11/30; also, I’ve added in a section about windowing (I forgot about that), read-only folders is now part of the PackageFS point, and I’ve also slightly modified the part about threads to not credit Haiku’s performance to them, per the advice from @donn.

Okay, with that done, I gotta finish my long-overdue Beta 1 review!

Nice read, learned a thing or two

The link to your article was published on Hackernews: https://news.ycombinator.com/item?id=18583722

Oh wow; never knew it’d be read this much! Coolness! Thanks for the share!

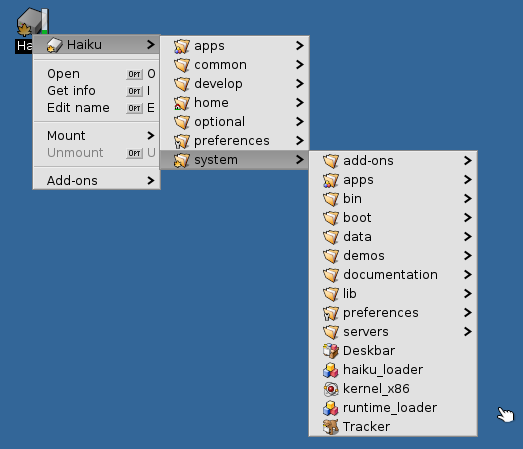

Added edit: Just wanted to mention that one thing I had forgotten to mention at first, and it has now been added, is the drill-down folder navigation feature in BeOS and Haiku.

While I have used threads with windows, the main advantage to me is thinking about how a program/method works and seeing if I can break the problem down into multiple threads for a boost in speed.

Most programs do not break down, but the times I make it work I see a huge speed-up in processing. Usually by using 4 or 8 threads I can see a 3-8 times speedup in my code. But in one special case, by using 256 threads I was able to speed up a program over 30 times on an i7 CPU with 4 cores and hyper-threading because of the way message passing gave the processing short-cuts…

I would love a link to that code. Is it on Github anywhere?

That’s bound to be a corner case anyway.

If you feel you need a pack of workers doing the same work at the same time, or some work in parallel, then you might think about threading.

But for small tasks, my bet is that an event queue with workers would fit better (assuming you have a method of answering the requester by some tag, or a direct connection with the worker).
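A minimal sketch of that idea, assuming replies are matched to requests by a tag chosen by the requester. It is written in Python with a hypothetical helper name (`run_tagged_jobs`); the worker count and the job format are arbitrary choices for the example.

```python
import queue
import threading


def run_tagged_jobs(jobs, worker_count=2):
    """Run (tag, func, arg) jobs on a small worker pool; return {tag: result}."""
    requests = queue.Queue()
    replies = queue.Queue()

    def worker():
        while True:
            item = requests.get()
            if item is None:               # sentinel: no more work
                break
            tag, func, arg = item
            # The tag travels with the result so the requester can match it up.
            replies.put((tag, func(arg)))

    workers = [threading.Thread(target=worker) for _ in range(worker_count)]
    for thread in workers:
        thread.start()
    for job in jobs:
        requests.put(job)
    for _ in workers:
        requests.put(None)                 # one sentinel per worker
    for thread in workers:
        thread.join()

    results = {}
    while not replies.empty():             # safe here: all workers have exited
        tag, value = replies.get()
        results[tag] = value
    return results
```

For example, `run_tagged_jobs([("length", len, "hello"), ("second", lambda s: s.split()[1], "extract the second word")])` returns a dictionary keyed by `"length"` and `"second"`, regardless of which worker finished first.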