I tried capturing the screen with the DMA engine and got a significant improvement:

memcpy

5.27151 FPS

CopyDma

91.7797 FPS

CopyDma (high clock settings)

524.651 FPS

The DMA engine can be used on older GPUs, and it doesn’t need Vulkan or OpenGL drivers.

That’s amazing and niiiiiiiiiiiiiice!

Thank you for thinking about old hardware users!

More people have written that they are installing and trying out Haiku on older hardware from the shelf. I also have old laptops and one or two P4 boards to try it on.

Thanks.

Big congrats and thanks to X512 for their amazing work getting Radeon GPUs working under Haiku!

So these cards are partly supported, are they?

libdrm_radeon: Radeon HD 2000-series to Radeon HD 7000-series

libdrm_amdgpu: Radeon HD 7700-series to Radeon RX 6000-series (RX 6800(M))

Is the support in the latest nightly builds, or has this new Radeon code not been merged into the Haiku repo yet?

Is there any hope for getting hardware accelerated video decoding (of h264/h265) working under mpv eventually?

No.

Not yet done.

Possibly.

I updated Mesa RADV to the latest upstream Git version. The latest Zink is not working, so the old one is currently used. The new RADV driver makes the libdrm Syncobj API mandatory, so I implemented initial support for it. Syncobj support is still incomplete, so the old buffer completion waiting logic has been restored.

I did some bugfixes. When GPU display output was used, GPU page faults occurred; an HDP flush was required for page tables located in VRAM. I also fixed a bug in the SA Domains request queue logic that caused random crashes. After the fixes, the test application ran stably overnight. No page faults, crashes, freezes or interrupt delivery problems.

I also fixed and simplified the VideoStreams VideoConsumer logic, so buffer flipping now works correctly for all tests (VideoFileProducer, TestProducer, Compositor).

I implemented hardware cursor support. The current GPU supports hardware 32-bit RGBA cursors with a maximum size of 64x64.
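To put the cursor limits in numbers, here is a small sketch, not driver code. The constant and function names are made up for illustration; only the 32-bit RGBA format and the 64x64 maximum come from the post.

```cpp
#include <cstddef>
#include <cstdint>

// Limits stated in the post: 32-bit RGBA, at most 64x64 pixels.
// The names below are hypothetical, chosen only for this example.
constexpr uint32_t kCursorMaxWidth = 64;
constexpr uint32_t kCursorMaxHeight = 64;
constexpr uint32_t kCursorBytesPerPixel = 4;

// Size in bytes of a full-size cursor image: 64 * 64 * 4 = 16384.
constexpr size_t CursorBufferSize()
{
	return size_t(kCursorMaxWidth) * kCursorMaxHeight * kCursorBytesPerPixel;
}

// Returns true if a cursor bitmap of the given size fits the hardware
// limit; anything larger would need a software fallback.
constexpr bool FitsHardwareCursor(uint32_t width, uint32_t height)
{
	return width > 0 && height > 0
		&& width <= kCursorMaxWidth && height <= kCursorMaxHeight;
}
```

So a full-size cursor image takes 16 KiB, and any bitmap larger than 64x64 would have to be drawn in software or scaled down.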

Next tasks:

Implementing GPU buffer sharing between processes and integrating it with VideoStreams, including VRAM buffers.

The Compositor needs to be modified to support hardware compositing in VRAM.

The VideoStreams copy present mode needs to be implemented so it can be used for the app_server back buffer.

Designing a new accelerant API and the corresponding AccelerantHWInterface in app_server. app_server can support both the old and the new API for easier migration.

Tasks that can be done later:

Proper implementation of Syncobj.

Proper implementation of per-process GPU virtual address space assignment. Currently it works only if a single process is executing command buffers at any given time.

Proper initialization, finalization and GPU reset.

Proper collection of GPU information. Currently a dump of the Linux driver output is used in some places.

Implement GPU handling that depends on GPU version and generation.

Fix latest Zink.

Fix HDMI modesetting (this needs tracing of the Linux driver’s AtomBIOS calls). Currently the mode set by UEFI GOP is used.

Implement Vulkan WSI layer add-on for VideoStreams VideoProducer (VkProducer).

Implement Mesa OpenGL integration for VideoStreams VideoProducer (GLProducer).

Fantastic progress.

I ended up getting a Radeon-based laptop specifically because of your driver efforts (it has an integrated Vega 8 as well as a discrete RX 6600M, Navi 2).

I’ll also send an email to AMD themselves mentioning that the reason I chose an AMD GPU is the efforts of a Haiku open source developer and AMD’s support for open drivers. I’ll let them know about your work, and that their open practices resulted in monetary rewards. Maybe that will encourage them to send you some sample hardware.

PS. (Off topic) I got the HP Omen 16 AMD Advantage Edition; I need to log an issue with the NVMe driver preventing boot to desktop.

@Zenja Nice. Can we see exactly which laptop it is?

Sorry for being off topic.

Filed a crash report

https://dev.haiku-os.org/ticket/17484

Super nice laptop!!! Thank you for sharing.

IIRC there is an API in development for Vulkan for RADV and video decoding/encoding.

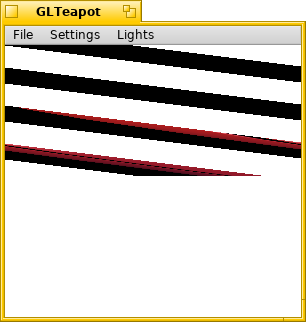

I managed to run the latest Zink from the Git repository. The latest Zink version requires pipe_context, which is currently not used in HGL. Currently something is wrong with the stride.

But Blender 3.0.0 is working fine. GLTeapot is a very sophisticated application, not comparable with something as simple as Blender.

Nice. It would be interesting to see how far my game engine gets before getting shot down. Just out of curiosity. Not expecting rocket results here at all.

But with Haiku and hardware 3D it’s rockets all the way to the mooon!!

That’s a teapot on steroids!

IIRC I get similar results with llvmpipe. I don’t think it renders as many frames as it can.

I got over 900 FPS with the software renderer, so it definitely doesn’t use all the available horsepower yet.

Look at how much better it renders the teapot; the polygon count has to be 10x that of the typical Haiku demo.